Compare commits

43 Commits

fix/sync-c

...

content/da

| Author | SHA1 | Date | |
|---|---|---|---|
| | f6589f9a8c | | |
| | 5c287d825f | | |
| | 449e8f12e4 | | |
| | a15b13cedd | | |
| | 609683db2f | | |
| | 3e21d05767 | | |
| | 82edfba6e9 | | |
| | 65d7a737ac | | |
| | 2e0a69ad72 | | |
| | 485ffcf755 | | |
| | 933c568066 | | |
| | 3b809d9f81 | | |
| | 12ae7de3c5 | | |
| | 9316d4027f | | |
| | 5a63432412 | | |
| | ffecb5ae1a | | |
| | efd5d20089 | | |
| | 7a51c1af6c | | |
| | 6970cccc85 | | |
| | 78940d44a9 | | |
| | 6f11403a41 | | |
| | 214799b0c2 | | |
| | b5f564cba4 | | |
| | 3a4514b9f1 | | |
| | df53280ee9 | | |
| | 487a6a222b | | |
| | 7933e222ee | | |
| | e7b8c033fb | | |
| | d893d0fe5d | | |
| | 1c8571e484 | | |
| | 3b43ed33c1 | | |
| | 8a276d8e04 | | |
| | 36a9e987b5 | | |
| | 402104665e | | |
| | 9ec3c1fb9d | | |
| | 179cefe4da | | |
| | 93c1ea0496 | | |
| | c9ee8b0ee0 | | |
| | 0d5bce309e | | |
| | 87a2d493e2 | | |
| | 4c7daa6a5b | | |
| | 88ac6406f9 | | |
| | 374a56eeff | | |

@@ -3,6 +3,6 @@
    "enabled": false
  },
  "_variables": {
    "lastUpdateCheck": 1755042938009
    "lastUpdateCheck": 1756224238932
  }
}

7

.github/workflows/sync-content-to-repo.yml

vendored

@@ -50,11 +50,10 @@ jobs:
branch: "chore/sync-content-to-repo-${{ inputs.roadmap_slug }}"
base: "master"
labels: |
  dependencies
  automated pr
reviewers: arikchakma
reviewers: jcanalesluna,kamranahmedse
commit-message: "chore: sync content to repo"
title: "chore: sync content to repository"
title: "chore: sync content to repository - ${{ inputs.roadmap_slug }}"
body: |
  ## Sync Content to Repo

@@ -64,4 +63,4 @@ jobs:
  > Commit: ${{ github.sha }}
  > Workflow Path: ${{ github.workflow_ref }}

  **Please Review the Changes and Merge the PR if everything is fine.**
  **Please Review the Changes and Merge the PR if everything is fine.**

2

.github/workflows/sync-repo-to-database.yml

vendored

@@ -46,7 +46,7 @@ jobs:
echo "$CHANGED_FILES"

# Convert to space-separated list for the script
CHANGED_FILES_LIST=$(echo "$CHANGED_FILES" | tr '\n' ' ')
CHANGED_FILES_LIST=$(echo "$CHANGED_FILES" | tr '\n' ',')

echo "has_changes=true" >> $GITHUB_OUTPUT
echo "changed_files=$CHANGED_FILES_LIST" >> $GITHUB_OUTPUT

@@ -31,6 +31,7 @@
    "migrate:editor-roadmaps": "tsx ./scripts/migrate-editor-roadmap.ts",
    "sync:content-to-repo": "tsx ./scripts/sync-content-to-repo.ts",
    "sync:repo-to-database": "tsx ./scripts/sync-repo-to-database.ts",
    "migrate:content-repo-to-database": "tsx ./scripts/migrate-content-repo-to-database.ts",
    "test:e2e": "playwright test"
  },
  "dependencies": {


BIN

public/pdfs/roadmaps/bi-analyst.pdf

Normal file

BIN

public/pdfs/roadmaps/nextjs.pdf

Normal file

BIN

public/roadmaps/bi-analyst.png

Normal file

|

After Width: | Height: | Size: 633 KiB |

BIN

public/roadmaps/nextjs.png

Normal file

|

After Width: | Height: | Size: 326 KiB |

@@ -47,6 +47,7 @@ Here is the list of available roadmaps with more being actively worked upon.
- [Linux Roadmap](https://roadmap.sh/linux)
- [Terraform Roadmap](https://roadmap.sh/terraform)
- [Data Analyst Roadmap](https://roadmap.sh/data-analyst)
- [BI Analyst Roadmap](https://roadmap.sh/bi-analyst)
- [Data Engineer Roadmap](https://roadmap.sh/data-engineer)
- [Machine Learning Roadmap](https://roadmap.sh/machine-learning)
- [MLOps Roadmap](https://roadmap.sh/mlops)
@@ -61,6 +62,7 @@ Here is the list of available roadmaps with more being actively worked upon.
- [TypeScript Roadmap](https://roadmap.sh/typescript)
- [C++ Roadmap](https://roadmap.sh/cpp)
- [React Roadmap](https://roadmap.sh/react)
- [Next.js Roadmap](https://roadmap.sh/nextjs)
- [React Native Roadmap](https://roadmap.sh/react-native)
- [Vue Roadmap](https://roadmap.sh/vue)
- [Angular Roadmap](https://roadmap.sh/angular)

255

scripts/migrate-content-repo-to-database.ts

Normal file

@@ -0,0 +1,255 @@
import fs from 'node:fs/promises';
import path from 'node:path';
import { fileURLToPath } from 'node:url';
import type { OfficialRoadmapDocument } from '../src/queries/official-roadmap';
import { parse } from 'node-html-parser';
import { markdownToHtml } from '../src/lib/markdown';
import { htmlToMarkdown } from '../src/lib/html';
import matter from 'gray-matter';
import type { RoadmapFrontmatter } from '../src/lib/roadmap';
import {
  allowedOfficialRoadmapTopicResourceType,
  type AllowedOfficialRoadmapTopicResourceType,
  type SyncToDatabaseTopicContent,
} from '../src/queries/official-roadmap-topic';

const __filename = fileURLToPath(import.meta.url);
const __dirname = path.dirname(__filename);

const args = process.argv.slice(2);
const secret = args
  .find((arg) => arg.startsWith('--secret='))
  ?.replace('--secret=', '');
if (!secret) {
  throw new Error('Secret is required');
}

let roadmapJsonCache: Map<string, OfficialRoadmapDocument> = new Map();
export async function fetchRoadmapJson(
  roadmapId: string,
): Promise<OfficialRoadmapDocument> {
  if (roadmapJsonCache.has(roadmapId)) {
    return roadmapJsonCache.get(roadmapId)!;
  }

  const response = await fetch(
    `https://roadmap.sh/api/v1-official-roadmap/${roadmapId}`,
  );

  if (!response.ok) {
    throw new Error(
      `Failed to fetch roadmap json: ${response.statusText} for ${roadmapId}`,
    );
  }

  const data = await response.json();
  if (data.error) {
    throw new Error(
      `Failed to fetch roadmap json: ${data.error} for ${roadmapId}`,
    );
  }

  roadmapJsonCache.set(roadmapId, data);
  return data;
}

export async function syncContentToDatabase(
  topics: SyncToDatabaseTopicContent[],
) {
  const response = await fetch(
    `https://roadmap.sh/api/v1-sync-official-roadmap-topics`,
    {
      method: 'POST',
      headers: {
        'Content-Type': 'application/json',
      },
      body: JSON.stringify({
        topics,
        secret,
      }),
    },
  );

  if (!response.ok) {
    const error = await response.json();
    throw new Error(
      `Failed to sync content to database: ${response.statusText} ${JSON.stringify(error, null, 2)}`,
    );
  }

  return response.json();
}

// Directory containing the roadmaps
const ROADMAP_CONTENT_DIR = path.join(__dirname, '../src/data/roadmaps');
const allRoadmaps = await fs.readdir(ROADMAP_CONTENT_DIR);

const editorRoadmapIds = new Set<string>();
for (const roadmapId of allRoadmaps) {
  const roadmapFrontmatterDir = path.join(
    ROADMAP_CONTENT_DIR,
    roadmapId,
    `${roadmapId}.md`,
  );
  const roadmapFrontmatterRaw = await fs.readFile(
    roadmapFrontmatterDir,
    'utf-8',
  );
  const { data } = matter(roadmapFrontmatterRaw);

  const roadmapFrontmatter = data as RoadmapFrontmatter;
  if (roadmapFrontmatter.renderer === 'editor') {
    editorRoadmapIds.add(roadmapId);
  }
}

for (const roadmapId of editorRoadmapIds) {
  try {
    const roadmap = await fetchRoadmapJson(roadmapId);

    const files = await fs.readdir(
      path.join(ROADMAP_CONTENT_DIR, roadmapId, 'content'),
    );

    console.log(`🚀 Starting ${files.length} files for ${roadmapId}`);
    const topics: SyncToDatabaseTopicContent[] = [];

    for (const file of files) {
      const isContentFile = file.endsWith('.md');
      if (!isContentFile) {
        console.log(`🚨 Skipping ${file} because it is not a content file`);
        continue;
      }

      const nodeSlug = file.replace('.md', '');
      if (!nodeSlug) {
        console.error(`🚨 Node id is required: ${file}`);
        continue;
      }

      const nodeId = nodeSlug.split('@')?.[1];
      if (!nodeId) {
        console.error(`🚨 Node id is required: ${file}`);
        continue;
      }

      const node = roadmap.nodes.find((node) => node.id === nodeId);
      if (!node) {
        console.error(`🚨 Node not found: ${file}`);
        continue;
      }

      const filePath = path.join(
        ROADMAP_CONTENT_DIR,
        roadmapId,
        'content',
        `${nodeSlug}.md`,
      );

      const fileExists = await fs
        .stat(filePath)
        .then(() => true)
        .catch(() => false);
      if (!fileExists) {
        console.log(`🚨 File not found: ${filePath}`);
        continue;
      }

      const content = await fs.readFile(filePath, 'utf8');
      const html = markdownToHtml(content, false);
      const rootHtml = parse(html);

      let ulWithLinks: HTMLElement | undefined;
      rootHtml.querySelectorAll('ul').forEach((ul) => {
        const listWithJustLinks = Array.from(ul.querySelectorAll('li')).filter(
          (li) => {
            const link = li.querySelector('a');
            return link && link.textContent?.trim() === li.textContent?.trim();
          },
        );

        if (listWithJustLinks.length > 0) {
          // @ts-expect-error - TODO: fix this
          ulWithLinks = ul;
        }
      });

      const listLinks: SyncToDatabaseTopicContent['resources'] =
        ulWithLinks !== undefined
          ? Array.from(ulWithLinks.querySelectorAll('li > a'))
              .map((link) => {
                const typePattern = /@([a-z.]+)@/;
                let linkText = link.textContent || '';
                const linkHref = link.getAttribute('href') || '';
                let linkType = linkText.match(typePattern)?.[1] || 'article';
                linkType = allowedOfficialRoadmapTopicResourceType.includes(
                  linkType as any,
                )
                  ? linkType
                  : 'article';

                linkText = linkText.replace(typePattern, '');

                if (!linkText || !linkHref) {
                  return null;
                }

                return {
                  title: linkText,
                  url: linkHref,
                  type: linkType as AllowedOfficialRoadmapTopicResourceType,
                };
              })
              .filter((link) => link !== null)
              .sort((a, b) => {
                const order = [
                  'official',
                  'opensource',
                  'article',
                  'video',
                  'feed',
                ];
                return order.indexOf(a!.type) - order.indexOf(b!.type);
              })
          : [];

      const title = rootHtml.querySelector('h1');
      ulWithLinks?.remove();
      title?.remove();

      const allParagraphs = rootHtml.querySelectorAll('p');
      if (listLinks.length > 0 && allParagraphs.length > 0) {
        // to remove the view more see more from the description
        const lastParagraph = allParagraphs[allParagraphs.length - 1];
        lastParagraph?.remove();
      }

      const htmlStringWithoutLinks = rootHtml.toString();
      const description = htmlToMarkdown(htmlStringWithoutLinks);

      const updatedDescription =
        `# ${title?.textContent}\n\n${description}`.trim();

      const label = node?.data?.label as string;
      if (!label) {
        console.error(`🚨 Label is required: ${file}`);
        continue;
      }

      topics.push({
        roadmapSlug: roadmapId,
        nodeId,
        description: updatedDescription,
        resources: listLinks,
      });
    }

    await syncContentToDatabase(topics);
    console.log(
      `✅ Synced ${topics.length} topics to database for ${roadmapId}`,
    );
  } catch (error) {
    console.error(error);
    process.exit(1);
  }
}
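Reviewer note (illustrative only, not part of the diff): the link handling in the new migration script can be read as two small steps, tag parsing and ordering. The sketch below restates them as stand-alone functions under some assumptions: `parseResource`, `sortResources`, and the `Resource` interface are hypothetical names, and the `trim()` on the title is an addition that the script itself does not perform. The type list and the sort order are copied from the diff.

```typescript
// Hypothetical stand-alone sketch; helper names are not from the repository.
const allowedTypes = [
  'roadmap',
  'official',
  'opensource',
  'article',
  'course',
  'podcast',
  'video',
  'book',
  'feed',
] as const;
type ResourceType = (typeof allowedTypes)[number];

interface Resource {
  title: string;
  url: string;
  type: ResourceType;
}

// Same pattern as in the script: a lowercase tag wrapped in "@", e.g. "@video@".
const typePattern = /@([a-z.]+)@/;

function parseResource(linkText: string, href: string): Resource | null {
  const rawType = linkText.match(typePattern)?.[1] ?? 'article';
  // Unknown tags fall back to "article", as in the script.
  const type = (allowedTypes as readonly string[]).includes(rawType)
    ? (rawType as ResourceType)
    : 'article';
  // Assumption: trimming is added here for tidiness.
  const title = linkText.replace(typePattern, '').trim();
  if (!title || !href) {
    return null;
  }
  return { title, url: href, type };
}

// Order copied from the script. Types missing from this list get index -1
// and therefore sort ahead of "official".
const order: ResourceType[] = ['official', 'opensource', 'article', 'video', 'feed'];

function sortResources(resources: Resource[]): Resource[] {
  return [...resources].sort(
    (a, b) => order.indexOf(a.type) - order.indexOf(b.type),
  );
}
```

One thing this makes visible: because `indexOf` returns -1 for types such as `course` or `book`, those resources end up before `official` ones. Whether that is intended is not stated in the diff.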

@@ -3,6 +3,7 @@ import path from 'node:path';
import { fileURLToPath } from 'node:url';
import { slugify } from '../src/lib/slugger';
import type { OfficialRoadmapDocument } from '../src/queries/official-roadmap';
import type { OfficialRoadmapTopicContentDocument } from '../src/queries/official-roadmap-topic';

const __filename = fileURLToPath(import.meta.url);
const __dirname = path.dirname(__filename);
@@ -19,36 +20,6 @@ if (!roadmapSlug || roadmapSlug === '__default__') {
}

console.log(`🚀 Starting ${roadmapSlug}`);
export const allowedOfficialRoadmapTopicResourceType = [
  'roadmap',
  'official',
  'opensource',
  'article',
  'course',
  'podcast',
  'video',
  'book',
  'feed',
] as const;
export type AllowedOfficialRoadmapTopicResourceType =
  (typeof allowedOfficialRoadmapTopicResourceType)[number];

export type OfficialRoadmapTopicResource = {
  _id?: string;
  type: AllowedOfficialRoadmapTopicResourceType;
  title: string;
  url: string;
};

export interface OfficialRoadmapTopicContentDocument {
  _id?: string;
  roadmapSlug: string;
  nodeId: string;
  description: string;
  resources: OfficialRoadmapTopicResource[];
  createdAt: Date;
  updatedAt: Date;
}

export async function roadmapTopics(
  roadmapId: string,

@@ -8,16 +8,20 @@ import { htmlToMarkdown } from '../src/lib/html';
import {
  allowedOfficialRoadmapTopicResourceType,
  type AllowedOfficialRoadmapTopicResourceType,
  type OfficialRoadmapTopicContentDocument,
  type OfficialRoadmapTopicResource,
} from './sync-content-to-repo';
  type SyncToDatabaseTopicContent,
} from '../src/queries/official-roadmap-topic';

const __filename = fileURLToPath(import.meta.url);
const __dirname = path.dirname(__filename);

const args = process.argv.slice(2);
const allFiles = args?.[0]?.replace('--files=', '');
const secret = args?.[1]?.replace('--secret=', '');
const allFiles = args
  .find((arg) => arg.startsWith('--files='))
  ?.replace('--files=', '');
const secret = args
  .find((arg) => arg.startsWith('--secret='))
  ?.replace('--secret=', '');

if (!secret) {
  throw new Error('Secret is required');
}
@@ -35,12 +39,16 @@ export async function fetchRoadmapJson(
  );

  if (!response.ok) {
    throw new Error(`Failed to fetch roadmap json: ${response.statusText}`);
    throw new Error(
      `Failed to fetch roadmap json: ${response.statusText} for ${roadmapId}`,
    );
  }

  const data = await response.json();
  if (data.error) {
    throw new Error(`Failed to fetch roadmap json: ${data.error}`);
    throw new Error(
      `Failed to fetch roadmap json: ${data.error} for ${roadmapId}`,
    );
  }

  roadmapJsonCache.set(roadmapId, data);
@@ -48,15 +56,15 @@ export async function fetchRoadmapJson(
}

export async function syncContentToDatabase(
  topics: Omit<
    OfficialRoadmapTopicContentDocument,
    'createdAt' | 'updatedAt' | '_id'
  >[],
  topics: SyncToDatabaseTopicContent[],
) {
  const response = await fetch(
    `https://roadmap.sh/api/v1-sync-official-roadmap-topics`,
    {
      method: 'POST',
      headers: {
        'Content-Type': 'application/json',
      },
      body: JSON.stringify({
        topics,
        secret,
@@ -65,24 +73,30 @@ export async function syncContentToDatabase(
  );

  if (!response.ok) {
    const error = await response.json();
    throw new Error(
      `Failed to sync content to database: ${response.statusText}`,
      `Failed to sync content to database: ${response.statusText} ${JSON.stringify(error, null, 2)}`,
    );
  }

  return response.json();
}

const files = allFiles.split(' ');
console.log(`🚀 Starting ${files.length} files`);
const files =
  allFiles
    ?.split(',')
    .map((file) => file.trim())
    .filter(Boolean) || [];
if (files.length === 0) {
  console.log('No files to sync');
  process.exit(0);
}

console.log(`🚀 Starting ${files.length} files`);
const ROADMAP_CONTENT_DIR = path.join(__dirname, '../src/data/roadmaps');

try {
  const topics: Omit<
    OfficialRoadmapTopicContentDocument,
    'createdAt' | 'updatedAt' | '_id'
  >[] = [];
  const topics: SyncToDatabaseTopicContent[] = [];

  for (const file of files) {
    const isContentFile = file.endsWith('.md') && file.includes('content/');
@@ -124,6 +138,15 @@ try {
      `${nodeSlug}.md`,
    );

    const fileExists = await fs
      .stat(filePath)
      .then(() => true)
      .catch(() => false);
    if (!fileExists) {
      console.log(`🚨 File not found: ${filePath}`);
      continue;
    }

    const content = await fs.readFile(filePath, 'utf8');
    const html = markdownToHtml(content, false);
    const rootHtml = parse(html);
@@ -143,7 +166,7 @@ try {
      }
    });

    const listLinks: Omit<OfficialRoadmapTopicResource, '_id'>[] =
    const listLinks: SyncToDatabaseTopicContent['resources'] =
      ulWithLinks !== undefined
        ? Array.from(ulWithLinks.querySelectorAll('li > a'))
            .map((link) => {
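Reviewer note (illustrative only, not part of the diff): the hunks above replace positional argument access with lookup by flag name, and switch the file list from space-separated to comma-separated to match the `tr '\n' ','` change in the workflow. A minimal sketch of both ideas follows; `getFlag` and `parseFileList` are hypothetical helper names, and the sample arguments are made up.

```typescript
// Hypothetical helpers; the script in the diff inlines this logic instead.

// Look a flag up by name so the order of CLI arguments no longer matters.
function getFlag(args: string[], name: string): string | undefined {
  const prefix = `--${name}=`;
  return args.find((arg) => arg.startsWith(prefix))?.slice(prefix.length);
}

// Split a comma-separated list, dropping empty entries. Since `echo` ends its
// output with a newline, `tr '\n' ','` leaves a trailing comma, which the
// filter step removes.
function parseFileList(value: string | undefined): string[] {
  return (
    value
      ?.split(',')
      .map((file) => file.trim())
      .filter(Boolean) ?? []
  );
}

// Made-up arguments, given in the "wrong" order on purpose.
const sampleArgs = ['--secret=example', '--files=a/content/x.md,b/content/y.md,'];
const sampleFiles = parseFileList(getFlag(sampleArgs, 'files'));
console.log(sampleFiles.length);
```

With the earlier positional version (`args[0]`, `args[1]`), passing `--secret` first would silently swap the two values, which is presumably what the change guards against.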

@@ -1,45 +1,46 @@
---
import { DateTime } from 'luxon';
import type { ChangelogFileType } from '../../lib/changelog';
import ChangelogImages from '../ChangelogImages';
import type { ChangelogDocument } from '../../queries/changelog';

interface Props {
  changelog: ChangelogFileType;
  changelog: ChangelogDocument;
}

const { changelog } = Astro.props;
const { frontmatter } = changelog;

const formattedDate = DateTime.fromISO(frontmatter.date).toFormat(
const formattedDate = DateTime.fromISO(changelog.createdAt).toFormat(
  'dd LLL, yyyy',
);
---

<div class='relative mb-6' id={changelog.id}>
  <span
    class='absolute -left-6 top-2 h-2 w-2 shrink-0 rounded-full bg-gray-300'
<div class='relative mb-6' id={changelog._id}>
  <span class='absolute top-2 -left-6 h-2 w-2 shrink-0 rounded-full bg-gray-300'
  ></span>

  <div class='mb-3 flex flex-col sm:flex-row items-start sm:items-center gap-0.5 sm:gap-2'>
  <div
    class='mb-3 flex flex-col items-start gap-0.5 sm:flex-row sm:items-center sm:gap-2'
  >
    <span class='shrink-0 text-xs tracking-wide text-gray-400'>
      {formattedDate}
    </span>
    <span class='truncate text-base font-medium text-balance'>
      {changelog.frontmatter.title}
      {changelog.title}
    </span>
  </div>

  <div class='rounded-xl border bg-white p-6'>
    {frontmatter.images && (
      <div class='mb-5 hidden sm:block -mx-6'>
        <ChangelogImages images={frontmatter.images} client:load />
      </div>
    )}
    {
      changelog.images && (
        <div class='-mx-6 mb-5 hidden sm:block'>
          <ChangelogImages images={changelog.images} client:load />
        </div>
      )
    }

    <div
      class='prose prose-sm prose-h2:mt-3 prose-h2:text-lg prose-h2:font-medium prose-p:mb-0 prose-blockquote:font-normal prose-blockquote:text-gray-500 prose-ul:my-0 prose-ul:rounded-lg prose-ul:bg-gray-100 prose-ul:px-4 prose-ul:py-4 prose-ul:pl-7 prose-img:mt-0 prose-img:rounded-lg [&>blockquote>p]:mt-0 [&>ul>li]:my-0 [&>ul>li]:mb-1 [&>ul]:mt-3'
    >
      <changelog.Content />
    </div>
      class='prose prose-sm [&_li_p]:my-0 prose-h2:mt-3 prose-h2:text-lg prose-h2:font-medium prose-p:mb-0 prose-blockquote:font-normal prose-blockquote:text-gray-500 prose-ul:my-0 prose-ul:rounded-lg prose-ul:bg-gray-100 prose-ul:px-4 prose-ul:py-4 prose-ul:pl-7 prose-img:mt-0 prose-img:rounded-lg [&>blockquote>p]:mt-0 [&>ul]:mt-3 [&>ul>li]:my-0 [&>ul>li]:mb-1'
      set:html={changelog.description}
    />
  </div>
</div>

@@ -1,9 +1,9 @@
---
import { getAllChangelogs } from '../lib/changelog';
import { listChangelog } from '../queries/changelog';
import { DateTime } from 'luxon';
import AstroIcon from './AstroIcon.astro';
const allChangelogs = await getAllChangelogs();
const top10Changelogs = allChangelogs.slice(0, 10);

const changelogs = await listChangelog({ limit: 10 });
---

<div class='border-t bg-white py-6 text-left sm:py-16 sm:text-center'>
@@ -17,7 +17,7 @@ const top10Changelogs = allChangelogs.slice(0, 10);
      Actively Maintained
    </p>
    <p
      class='mb-2 mt-1 text-sm leading-relaxed text-gray-600 sm:my-2 sm:my-5 sm:text-lg'
      class='mt-1 mb-2 text-sm leading-relaxed text-gray-600 sm:my-5 sm:text-lg'
    >
      We are always improving our content, adding new resources and adding
      features to enhance your learning experience.
@@ -25,27 +25,27 @@ const top10Changelogs = allChangelogs.slice(0, 10);

    <div class='relative mt-2 text-left sm:mt-8'>
      <div
        class='absolute inset-y-0 left-[120px] hidden w-px -translate-x-[0.5px] translate-x-[5.75px] bg-gray-300 sm:block'
        class='absolute inset-y-0 left-[120px] hidden w-px translate-x-[5.75px] bg-gray-300 sm:block'
      >
      </div>
      <ul class='relative flex flex-col gap-4 py-4'>
        {
          top10Changelogs.map((changelog) => {
          changelogs.map((changelog) => {
            const formattedDate = DateTime.fromISO(
              changelog.frontmatter.date,
              changelog.createdAt,
            ).toFormat('dd LLL, yyyy');
            return (
              <li class='relative'>
                <a
                  href={`/changelog#${changelog.id}`}
                  href={`/changelog#${changelog._id}`}
                  class='flex flex-col items-start sm:flex-row sm:items-center'
                >
                  <span class='shrink-0 pr-0 text-right text-sm tracking-wide text-gray-400 sm:w-[120px] sm:pr-4'>
                    {formattedDate}
                  </span>
                  <span class='hidden h-3 w-3 shrink-0 rounded-full bg-gray-300 sm:block' />
                  <span class='text-balance text-base font-medium text-gray-900 sm:pl-8'>
                    {changelog.frontmatter.title}
                  <span class='text-base font-medium text-balance text-gray-900 sm:pl-8'>
                    {changelog.title}
                  </span>
                </a>
              </li>
@@ -55,7 +55,7 @@ const top10Changelogs = allChangelogs.slice(0, 10);
      </ul>
    </div>
    <div
      class='mt-2 flex flex-col gap-2 sm:flex-row sm:mt-8 sm:items-center sm:justify-center'
      class='mt-2 flex flex-col gap-2 sm:mt-8 sm:flex-row sm:items-center sm:justify-center'
    >
      <a
        href='/changelog'
@@ -66,7 +66,7 @@ const top10Changelogs = allChangelogs.slice(0, 10);
      <button
        data-guest-required
        data-popup='login-popup'
        class='flex flex-row items-center gap-2 rounded-lg border border-black bg-white px-4 py-2 text-sm text-black transition-all hover:bg-black hover:text-white sm:rounded-full sm:pl-4 sm:pr-5 sm:text-base'
        class='flex flex-row items-center gap-2 rounded-lg border border-black bg-white px-4 py-2 text-sm text-black transition-all hover:bg-black hover:text-white sm:rounded-full sm:pr-5 sm:pl-4 sm:text-base'
      >
        <AstroIcon icon='bell' class='h-5 w-5' />
        Subscribe for Notifications

@@ -1,13 +1,14 @@
import { ChevronLeft, ChevronRight } from 'lucide-react';
import React, { useState, useEffect, useCallback } from 'react';
import type { ChangelogImage } from '../queries/changelog';

interface ChangelogImagesProps {
  images: { [key: string]: string };
  images: ChangelogImage[];
}

const ChangelogImages: React.FC<ChangelogImagesProps> = ({ images }) => {
  const [enlargedImage, setEnlargedImage] = useState<string | null>(null);
  const imageArray = Object.entries(images);
  const imageArray = images.map((image) => [image.title, image.url]);

  const handleImageClick = (src: string) => {
    setEnlargedImage(src);
@@ -63,10 +64,10 @@ const ChangelogImages: React.FC<ChangelogImagesProps> = ({ images }) => {
              alt={title}
              className="h-[120px] w-full object-cover object-left-top"
            />
            <span className="absolute group-hover:opacity-0 inset-0 bg-linear-to-b from-transparent to-black/40" />
            <span className="absolute inset-0 bg-linear-to-b from-transparent to-black/40 group-hover:opacity-0" />

            <div className="absolute font-medium inset-x-0 top-full group-hover:inset-y-0 flex items-center justify-center px-2 text-center text-xs bg-black/50 text-white py-0.5 opacity-0 group-hover:opacity-100 cursor-pointer">
              <span className='bg-black py-0.5 rounded-sm px-1'>{title}</span>
            <div className="absolute inset-x-0 top-full flex cursor-pointer items-center justify-center bg-black/50 px-2 py-0.5 text-center text-xs font-medium text-white opacity-0 group-hover:inset-y-0 group-hover:opacity-100">
              <span className="rounded-sm bg-black px-1 py-0.5">{title}</span>
            </div>
          </div>
        ))}
@@ -82,7 +83,7 @@ const ChangelogImages: React.FC<ChangelogImagesProps> = ({ images }) => {
            className="max-h-[90%] max-w-[90%] rounded-xl object-contain"
          />
          <button
            className="absolute left-4 top-1/2 -translate-y-1/2 transform rounded-full bg-white/50 hover:bg-white/100 p-2"
            className="absolute top-1/2 left-4 -translate-y-1/2 transform rounded-full bg-white/50 p-2 hover:bg-white/100"
            onClick={(e) => {
              e.stopPropagation();
              handleNavigation('prev');
@@ -91,7 +92,7 @@ const ChangelogImages: React.FC<ChangelogImagesProps> = ({ images }) => {
            <ChevronLeft size={24} />
          </button>
          <button
            className="absolute right-4 top-1/2 -translate-y-1/2 transform rounded-full bg-white/50 hover:bg-white/100 p-2"
            className="absolute top-1/2 right-4 -translate-y-1/2 transform rounded-full bg-white/50 p-2 hover:bg-white/100"
            onClick={(e) => {
              e.stopPropagation();
              handleNavigation('next');
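Reviewer note (illustrative only, not part of the diff): the component's `images` prop changes from a title-to-URL record to an array of `ChangelogImage` objects. The sketch below shows both shapes side by side. The `ChangelogImage` interface is an assumption inferred from the `image.title` and `image.url` accesses in the diff (the real type lives in `../queries/changelog` and may have more fields), and `toImageEntries` and `fromRecord` are hypothetical helper names.

```typescript
// Assumed shape, inferred from how the diff reads `image.title` and `image.url`.
interface ChangelogImage {
  title: string;
  url: string;
}

// New shape -> [title, url] pairs. The explicit tuple return type is an
// addition: without it TypeScript infers string[][] for the mapped array.
function toImageEntries(images: ChangelogImage[]): [string, string][] {
  return images.map((image): [string, string] => [image.title, image.url]);
}

// Old frontmatter shape -> new shape, e.g. for migrating existing entries.
function fromRecord(images: Record<string, string>): ChangelogImage[] {
  return Object.entries(images).map(([title, url]) => ({ title, url }));
}

// Round trip with placeholder data.
const legacyImages = { First: 'https://example.com/1.png', Second: 'https://example.com/2.png' };
const imageEntries = toImageEntries(fromRecord(legacyImages));
console.log(imageEntries.length);
```

The pairs produced this way have the same `[title, url]` layout that `Object.entries` gave the old code, so destructuring call sites would not need to change.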

@@ -151,6 +151,12 @@ const groups: GroupType[] = [
        type: 'skill',
        otherGroups: ['Web Development'],
      },
      {
        title: 'Next.js',
        link: '/nextjs',
        type: 'skill',
        otherGroups: ['Web Development'],
      },
      {
        title: 'Spring Boot',
        link: '/spring-boot',
@@ -408,6 +414,11 @@ const groups: GroupType[] = [
        link: '/data-analyst',
        type: 'role',
      },
      {
        title: 'BI Analyst',
        link: '/bi-analyst',
        type: 'role',
      },
      {
        title: 'Data Engineer',
        link: '/data-engineer',

@@ -1,18 +0,0 @@
---
title: 'AI Agents, Red Teaming Roadmaps and Community Courses'
description: 'New roadmaps for AI Agents and Red Teaming plus access to community-generated AI courses'
images:
  'AI Agents': 'https://assets.roadmap.sh/guest/ai-agents-roadmap-min-poii3.png'
  'Red Teaming': 'https://assets.roadmap.sh/guest/ai-red-teaming-omyvx.png'
  'AI Community Courses': 'https://assets.roadmap.sh/guest/ai-community-courses.png'
seo:
  title: 'AI Agents, Red Teaming Roadmaps and Community Courses'
  description: ''
date: 2025-05-12
---

We added new AI roadmaps for AI Agents and Red Teaming and made our AI Tutor better with courses created by the community.

- We just released a new [AI Agents Roadmap](https://roadmap.sh/ai-agents) that covers how to build, design, and run smart autonomous systems.
- There's also a new [Red Teaming Roadmap](https://roadmap.sh/ai-red-teaming) for people who want to learn about testing AI systems for weaknesses and security flaws.
- Our [AI Tutor](https://roadmap.sh/ai) now lets you use courses made by other users. You can learn from their content or share your own learning plans.
@@ -1,25 +0,0 @@
---
title: 'AI Engineer Roadmap, Leaderboards, Editor AI, and more'
description: 'New AI Engineer Roadmap, New Leaderboards, AI Integration in Editor, and more'
images:
  "AI Engineer Roadmap": "https://assets.roadmap.sh/guest/ai-engineer-roadmap.png"
  "Refer Others": "https://assets.roadmap.sh/guest/invite-users.png"
  "Editor AI Integration": "https://assets.roadmap.sh/guest/editor-ai-integration.png"
  "Project Status": "https://assets.roadmap.sh/guest/project-status.png"
  "Leaderboards": "https://assets.roadmap.sh/guest/new-leaderboards.png"
seo:
  title: 'AI Engineer Roadmap, Leaderboards, Editor AI, and more'
  description: ''
date: 2024-10-04
---

We have a new AI Engineer roadmap, Contributor leaderboards, AI integration in the editor, and more.

- [AI Engineer Roadmap](https://roadmap.sh/ai-engineer) is now live
- You can now refer others to join roadmap.sh
- AI integration [in the editor](https://draw.roadmap.sh) to help you create and edit roadmaps faster
- New [Leaderboards](/leaderboard) for contributors and people who refer others

- [Projects pages](/frontend/projects) now show the status of each project

|

||||

- Bug fixes and performance improvements

|

||||

|

||||

ML Engineer roadmap and team dashboards are coming up next. Stay tuned!

|

||||

@@ -1,20 +0,0 @@

|

||||

---

|

||||

title: 'AI Quiz Summaries, New Go Roadmap, and YouTube Videos'

|

||||

description: 'Personalized AI summaries for quizzes, completely redrawn Go roadmap, and new YouTube videos for AI features'

|

||||

images:

|

||||

'AI Quiz Summary': 'https://assets.roadmap.sh/guest/quiz-summary-gnwel.png'

|

||||

'New Go Roadmap': 'https://assets.roadmap.sh/guest/roadmapsh_golang-rqzoc.png'

|

||||

'YouTube Videos': 'https://assets.roadmap.sh/guest/youtube-videos-4kygb.jpeg'

|

||||

'Roadmap Editor': 'https://assets.roadmap.sh/guest/ai-inside-editor.png'

|

||||

seo:

|

||||

title: 'AI Quiz Summaries, New Go Roadmap, and YouTube Videos'

|

||||

description: ''

|

||||

date: 2025-07-23

|

||||

---

|

||||

|

||||

We've added new AI-powered features and improved our content to help you learn more effectively.

|

||||

|

||||

- [AI quizzes](/ai/quiz) now provide personalized AI-generated summaries at the end, along with tailored course and guide suggestions to help you continue learning.

|

||||

- The [Go roadmap](/golang) has been completely redrawn with better quality and a more language-focused approach, replacing the previous version that was too web development oriented.

|

||||

- We now have [8 new YouTube videos](https://www.youtube.com/playlist?list=PLkZYeFmDuaN38LRfCSdAkzWVtTXMJt11A) covering all our AI features to help you make the most of them.

|

||||

- [Roadmap editor](/account/roadmaps) now allows you to generate a base roadmap from a prompt.

|

||||

@@ -1,22 +0,0 @@

|

||||

---

|

||||

title: 'AI Roadmaps Improved, Schedule Learning Time'

|

||||

description: 'AI Roadmaps are now deeper and we have added a new feature to schedule learning time'

|

||||

images:

|

||||

"AI Roadmaps Depth": "https://assets.roadmap.sh/guest/3-level-roadmaps-lotx1.png"

|

||||

"Schedule Learning Time": "https://assets.roadmap.sh/guest/schedule-learning-time.png"

|

||||

"Schedule Learning Time Modal": "https://assets.roadmap.sh/guest/schedule-learning-time-2.png"

|

||||

seo:

|

||||

title: 'AI Roadmaps Improved, Schedule Learning Time'

|

||||

description: ''

|

||||

date: 2024-11-18

|

||||

---

|

||||

|

||||

We have improved our AI roadmaps, added a way to schedule learning time, and made some site-wide bug fixes and improvements. Here are the details:

|

||||

|

||||

- [AI-generated roadmaps](https://roadmap.sh/ai) are now 3 levels deep, giving you more detailed information. We have also improved the quality of the generated roadmaps.

|

||||

- Schedule learning time on your calendar for any roadmap. Just click on the "Schedule Learning Time" button and select the time slot you want to block.

|

||||

- You can now dismiss the sticky roadmap progress indicator at the bottom of any roadmap.

|

||||

- We have added some new Project Ideas to our [Frontend Roadmap](/frontend/projects).

|

||||

- Bug fixes and performance improvements

|

||||

|

||||

We have a new Engineering Manager Roadmap coming this week. Stay tuned!

|

||||

@@ -1,18 +0,0 @@

|

||||

---

|

||||

title: 'AI Tutor, C++ and Java Roadmaps'

|

||||

description: 'We just launched our first paid SQL course'

|

||||

images:

|

||||

'AI Tutor': 'https://assets.roadmap.sh/guest/ai-tutor-xwth3.png'

|

||||

'Java Roadmap': 'https://assets.roadmap.sh/guest/new-java-roadmap-t7pkk.png'

|

||||

'C++ Roadmap': 'https://assets.roadmap.sh/guest/new-cpp-roadmap.png'

|

||||

seo:

|

||||

title: 'AI Tutor, C++ and Java Roadmaps'

|

||||

description: ''

|

||||

date: 2025-04-03

|

||||

---

|

||||

|

||||

We have revised the C++ and Java roadmaps and introduced an AI tutor to help you learn anything.

|

||||

|

||||

- We just launched an [AI Tutor](https://roadmap.sh/ai). Give it a topic, pick a difficulty level, and it will generate a personalized study plan for you. There is a map view, quizzes, and an embedded chat to help you along the way.

|

||||

- [C++ roadmap](https://roadmap.sh/cpp) has been revised with improved content

|

||||

- We have also redrawn the [Java roadmap](https://roadmap.sh/java) from scratch, replacing the deprecated items, adding new content and improving the overall structure.

|

||||

@@ -1,23 +0,0 @@

|

||||

---

|

||||

title: 'Revamped roadmaps, AI Tutor, Guides, Roadmaps and more'

|

||||

description: 'Revamped roadmaps, AI Tutor, AI Guides and AI Roadmaps'

|

||||

images:

|

||||

'Roadmap AI Tutor': 'https://assets.roadmap.sh/guest/floating-ai-tutor-n1o4n.png'

|

||||

'AI Tutor on Roadmap': 'https://assets.roadmap.sh/guest/dedicated-roadmap-chat-page-qlbfw.png'

|

||||

'AI Tutor': 'https://assets.roadmap.sh/guest/global-chat-evm4v.png'

|

||||

'Topics AI Tutor': 'https://assets.roadmap.sh/guest/roadmap-ai-tutor-89if2.png'

|

||||

'AI Guides and Roadmaps': 'https://assets.roadmap.sh/guest/ai-guides-roadmaps-lxmm3.jpeg'

|

||||

seo:

|

||||

title: 'Revamped roadmaps, AI Tutor, AI Guides and AI Roadmaps'

|

||||

description: ''

|

||||

date: 2025-06-27

|

||||

---

|

||||

|

||||

We've revamped some of our older roadmaps and added new AI features to help you learn better.

|

||||

|

||||

- Roadmap pages now have a [floating AI Tutor](/frontend) that can help you learn anything while following the roadmap.

|

||||

- There is a new [dedicated AI Tutor page](/frontend/ai) for each roadmap, where you can learn any topic of the roadmap, save history and more.

|

||||

- With [global chat](/ai/chat), you can ask any question about your career, upload your resume, get guidance, tips, roadmap suggestions and more.

|

||||

- Topic pages of roadmaps now have a new [AI Tutor](/frontend) that can help you learn the topic, get quizzed on the topic and more.

|

||||

- Apart from courses, you can now [generate AI Guides and AI Roadmaps](/ai) for any topic.

|

||||

- [MongoDB](/mongodb), [Kubernetes](/kubernetes), [Spring Boot](/spring-boot), [Linux](/linux), [React Native](/react-native) and [GraphQL](/graphql) roadmaps have been updated.

|

||||

@@ -1,21 +0,0 @@

|

||||

---

|

||||

title: 'Cloudflare and ASP.NET Roadmaps, New Dashboard'

|

||||

description: 'We just launched our first paid SQL course'

|

||||

images:

|

||||

'New Dashboard': 'https://assets.roadmap.sh/guest/new-dashboard.png'

|

||||

'Cloudflare Roadmap': 'https://assets.roadmap.sh/guest/cloudflare-roadmap.png'

|

||||

'ASP.NET Roadmap Revised': 'https://assets.roadmap.sh/guest/aspnet-core-revision.png'

|

||||

seo:

|

||||

title: 'Cloudflare and ASP.NET Roadmaps, New Dashboard'

|

||||

description: ''

|

||||

date: 2025-02-21

|

||||

---

|

||||

|

||||

We have launched a new Cloudflare roadmap, revised the ASP.NET Core roadmap, and introduced a new dashboard design.

|

||||

|

||||

- Brand new [Cloudflare roadmap](https://roadmap.sh/cloudflare) to help you learn Cloudflare

|

||||

- [ASP.NET Core roadmap](https://roadmap.sh/aspnet-core) has been revised with new content

|

||||

- Fresh new dashboard design with improved navigation and performance

|

||||

- Bug fixes and performance improvements

|

||||

|

||||

|

||||

@@ -1,22 +0,0 @@

|

||||

---

|

||||

title: 'DevOps Project Ideas, Team Dashboard, Redis Content'

|

||||

description: 'New Project Ideas for DevOps, Team Dashboard, Redis Content'

|

||||

images:

|

||||

"DevOps Project Ideas": "https://assets.roadmap.sh/guest/devops-project-ideas.png"

|

||||

"Redis Resources": "https://assets.roadmap.sh/guest/redis-resources.png"

|

||||

"Team Dashboard": "https://assets.roadmap.sh/guest/team-dashboard.png"

|

||||

seo:

|

||||

title: 'DevOps Project Ideas, Team Dashboard, Redis Content'

|

||||

description: ''

|

||||

date: 2024-10-16

|

||||

---

|

||||

|

||||

We have added 21 new project ideas to our DevOps roadmap, added content to the Redis roadmap, and introduced a new dashboard for teams.

|

||||

|

||||

- Practice your skills with [21 newly added DevOps Project Ideas](https://roadmap.sh/devops)

|

||||

- We have a new [Dashboard for teams](https://roadmap.sh/teams) to track their team activity.

|

||||

- [Redis roadmap](https://roadmap.sh/redis) now comes with learning resources.

|

||||

- Watch us [interview Bruno Simon](https://www.youtube.com/watch?v=IQK9T05BsOw) about his journey as a creative developer.

|

||||

- Bug fixes and performance improvements

|

||||

|

||||

ML Engineer roadmap and team dashboards are coming up next. Stay tuned!

|

||||

@@ -1,27 +0,0 @@

|

||||

---

|

||||

title: 'New Dashboard, Leaderboards and Projects'

|

||||

description: 'New leaderboard page showing the most active users'

|

||||

images:

|

||||

"Personal Dashboard": "https://assets.roadmap.sh/guest/personal-dashboard.png"

|

||||

"Projects Page": "https://assets.roadmap.sh/guest/projects-page.png"

|

||||

"Leaderboard": "https://assets.roadmap.sh/guest/leaderboard.png"

|

||||

seo:

|

||||

title: 'Leaderboard Page - roadmap.sh'

|

||||

description: ''

|

||||

date: 2024-09-17

|

||||

---

|

||||

|

||||

Focus for this week was improving the user experience and adding new features to the platform. Here are the highlights:

|

||||

|

||||

- New dashboard for logged-in users

|

||||

- New leaderboard page

|

||||

- Projects page listing all projects

|

||||

- Ability to stop a started project

|

||||

- Frontend and backend content improvements

|

||||

- Bug fixes

|

||||

|

||||

The [Dashboard](/) allows logged-in users to keep track of their bookmarks, learning progress, projects, and more. You can still visit the [old homepage](https://roadmap.sh/home) once you log in.

|

||||

|

||||

We also launched a new [leaderboard page](/leaderboard) showing the most active users, the users who completed the most projects, and more.

|

||||

|

||||

There is also a [new projects page](/projects) where you can see all the projects you have been working on. You can also now stop a started project.

|

||||

@@ -1,21 +0,0 @@

|

||||

---

|

||||

title: 'PHP and System Design Roadmaps, Get Featured'

|

||||

description: 'New PHP Roadmap, System Design Roadmap Revamped, and more'

|

||||

images:

|

||||

"PHP Roadmap": "https://assets.roadmap.sh/guest/php-roadmap.png"

|

||||

"Engineering Manager Roadmap": "https://assets.roadmap.sh/guest/engineering-manager-roadmap.png"

|

||||

"System Design Roadmap": "https://assets.roadmap.sh/guest/system-design.png"

|

||||

"Feature Custom Roadmaps": "https://assets.roadmap.sh/guest/apply-for-feature.png"

|

||||

seo:

|

||||

title: 'PHP and System Design Roadmaps, Get Featured'

|

||||

description: ''

|

||||

date: 2024-12-21

|

||||

---

|

||||

|

||||

We have a new PHP roadmap, and the System Design roadmap has been revamped. You can also get your custom roadmaps featured.

|

||||

|

||||

- One of the most requested roadmaps, the [PHP Roadmap](https://roadmap.sh/php), is now live

|

||||

- We have a new [Engineering Manager](https://roadmap.sh/engineering-manager) roadmap

|

||||

- We have revamped the [System Design Roadmap](https://roadmap.sh/system-design)

|

||||

- Get your custom roadmaps featured on the [community roadmaps](/community)

|

||||

- Bug fixes and performance improvements

|

||||

@@ -1,25 +0,0 @@

|

||||

---

|

||||

title: 'Redis Roadmap, Dashboard Changes, Bookmarks'

|

||||

description: 'New leaderboard page showing the most active users'

|

||||

images:

|

||||

"Redis Roadmap": "https://assets.roadmap.sh/guest/redis-roadmap.png"

|

||||

"Bookmarks": "https://assets.roadmap.sh/guest/bookmark-roadmaps.png"

|

||||

"Dashboard": "https://assets.roadmap.sh/guest/dashboard-profile.png"

|

||||

"Cyber Security Content": "https://assets.roadmap.sh/guest/cyber-security-content.png"

|

||||

seo:

|

||||

title: 'Redis Roadmap, Dashboard, Bookmarks - roadmap.sh'

|

||||

description: ''

|

||||

date: 2024-09-23

|

||||

---

|

||||

|

||||

We have a new roadmap, some improvements to the dashboard, bookmarks, and more.

|

||||

|

||||

- [Redis roadmap](https://roadmap.sh/redis) is now live

|

||||

- Progress and Bookmarks on the dashboard are now merged into a single section, which makes things easier to manage if you have a lot of bookmarks.

|

||||

- The profile section on the [dashboard](/) makes it easy to create a profile

|

||||

- Roadmaps can now be [bookmarked from any page](/roadmaps) that shows roadmaps.

|

||||

- User profile now shows the projects you are working on.

|

||||

- [Cyber Security roadmap](/cyber-security) is now filled with new content and resources.

|

||||

- Bug fixes and improvements to some team features.

|

||||

|

||||

Next up, we are working on a new AI Engineer roadmap and team dashboards.

|

||||

@@ -1,19 +0,0 @@

|

||||

---

|

||||

title: "Our first paid course about SQL is live"

|

||||

description: 'We just launched our first paid SQL course'

|

||||

images:

|

||||

"SQL Course": "https://assets.roadmap.sh/guest/course-environment-87jg8.png"

|

||||

"Course Creator Platform": "https://assets.roadmap.sh/guest/course-creator-platform.png"

|

||||

seo:

|

||||

title: 'SQL Course is Live'

|

||||

description: ''

|

||||

date: 2025-02-04

|

||||

---

|

||||

|

||||

After months of work, I am excited to announce our [brand-new SQL course](/courses/sql) designed to help you master SQL with a hands-on, practical approach!

|

||||

|

||||

For the past few months, we have been working on building a course platform that would not only help us deliver high-quality educational content but also sustain the development of roadmap.sh. This SQL course is our first step in that direction.

|

||||

|

||||

The course has been designed with a focus on practical learning. Each topic is accompanied by hands-on exercises, real-world examples, and an integrated coding environment where you can practice what you learn. The AI integration provides personalized learning assistance, helping you grasp concepts better and faster.

|

||||

|

||||

Check out the course at [roadmap.sh/courses/sql](https://roadmap.sh/courses/sql)

|

||||

@@ -1,198 +0,0 @@

|

||||

---

|

||||

title: 'Is Data Science a Good Career? Advice From a Pro'

|

||||

description: 'Is data science a good career choice? Learn from a professional about the benefits, growth potential, and how to thrive in this exciting field.'

|

||||

authorId: fernando

|

||||

excludedBySlug: '/ai-data-scientist/career-path'

|

||||

seo:

|

||||

title: 'Is Data Science a Good Career? Advice From a Pro'

|

||||

description: 'Is data science a good career choice? Learn from a professional about the benefits, growth potential, and how to thrive in this exciting field.'

|

||||

ogImageUrl: 'https://assets.roadmap.sh/guest/is-data-science-a-good-career-10j3j.jpg'

|

||||

isNew: false

|

||||

type: 'textual'

|

||||

date: 2025-01-28

|

||||

sitemap:

|

||||

priority: 0.7

|

||||

changefreq: 'weekly'

|

||||

tags:

|

||||

- 'guide'

|

||||

- 'textual-guide'

|

||||

- 'guide-sitemap'

|

||||

---

|

||||

|

||||

|

||||

|

||||

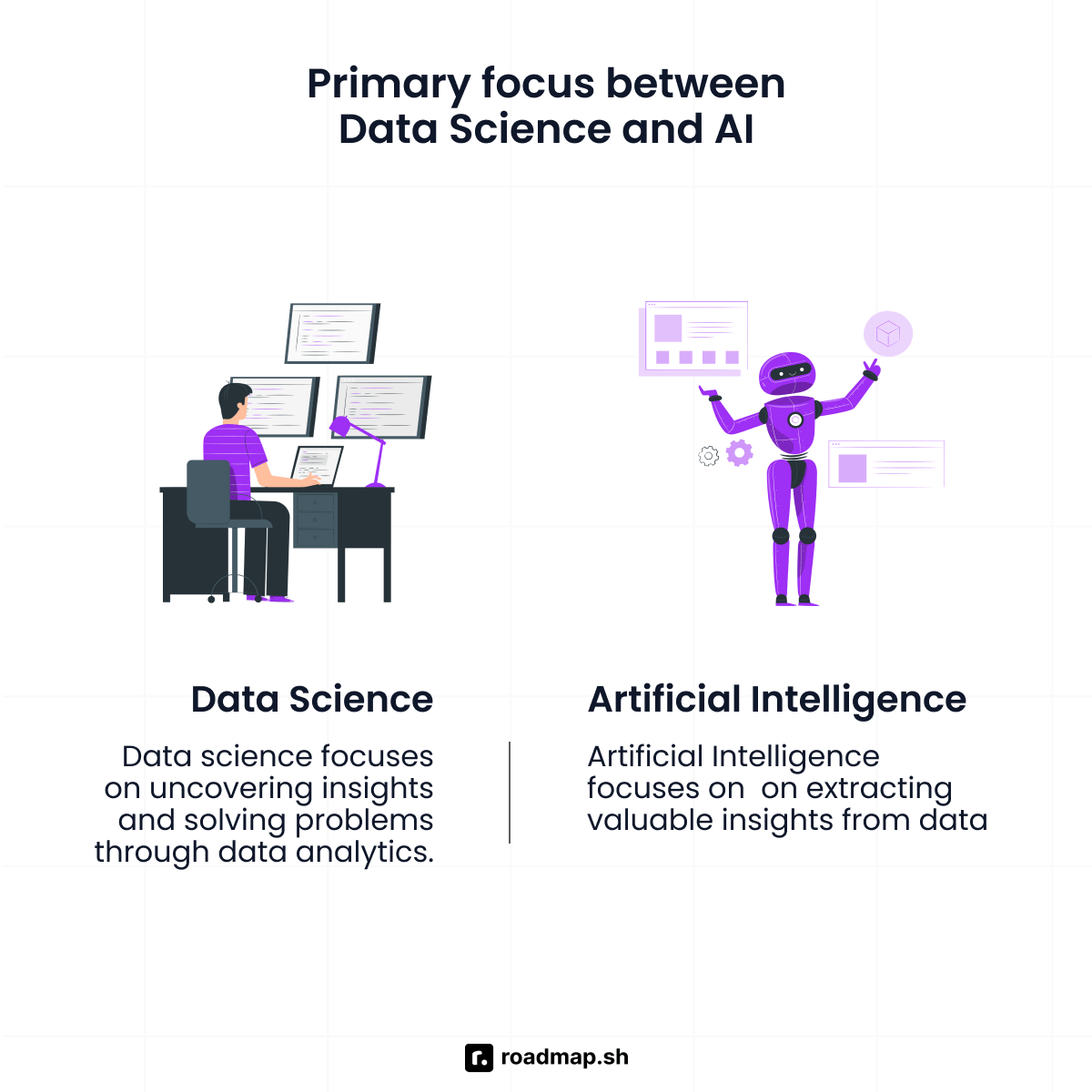

Data science is one of the most talked-about career paths today, but is it the right fit for you?

|

||||

|

||||

With [data science](https://roadmap.sh/ai-data-scientist) at the intersection of technology, creativity, and impact, it can be a very appealing role. It promises high, competitive salaries and the chance to solve real-world problems. Who would say “no” to that?!

|

||||

|

||||

But is it the right fit for your skills and aspirations?

|

||||

|

||||

In this guide, I’ll help you uncover the answer to that question by understanding the pros and cons of working as a data scientist. I’ll also look at what the data scientists’ salaries are like and the type of skills you’d need to have to succeed at the job.

|

||||

|

||||

Now sit down, relax, and read carefully, because I’m about to help you answer the question of “Is data science a good career for me?”.

|

||||

|

||||

## Pros of a Career in Data Science

|

||||

|

||||

|

||||

|

||||

There are plenty of “pros” when it comes to picking data science as your career, but let’s take a closer look at the main ones.

|

||||

|

||||

### High Demand and Job Security

|

||||

|

||||

The demand for data scientists has grown exponentially over the past few years and shows no signs of slowing down. According to the [U.S. Bureau of Labor Statistics](https://www.bls.gov/ooh/math/data-scientists.htm), the data science job market is projected to grow by 36% from 2023 to 2033, far outpacing the average for other fields.

|

||||

|

||||

This surge is partly due to the “explosion” of artificial intelligence, particularly tools like ChatGPT, in recent years, which have amplified the need for skilled data scientists to handle complex machine learning models and big data analysis.

|

||||

|

||||

### Competitive Salaries

|

||||

|

||||

One of the most appealing aspects of data science positions is the average data scientist’s salary. Reports from Glassdoor and Indeed highlight that data scientists are among the highest-paid professionals in the technology sector. For example, the national average salary for a data scientist in the United States is approximately $120,000 annually, with experienced professionals earning significantly more.

|

||||

|

||||

These salaries are a reflection of the reality: the high demand for [data science skills](https://roadmap.sh/ai-data-scientist/skills) and the technical expertise required for these roles are not easy to come by. What’s even more, companies in high-cost regions, such as Silicon Valley, New York City, and Seattle, tend to offer premium salaries to attract top talent.

|

||||

|

||||

The financial rewards in this field are usually complemented by additional benefits such as opportunities for professional development like research, publishing, patent registration, etc.

|

||||

|

||||

### Intellectual Challenge and Learning Opportunities

|

||||

|

||||

Data scientists work in a field that demands continuous learning and adaptation to emerging technologies. Their field is rooted in solving complex problems through a combination of technical knowledge, creativity, and critical thinking. In other words, they rarely have any time to get bored.

|

||||

|

||||

What makes data science important and intellectually rewarding is its ability to address real-world problems. Whether it's optimizing healthcare systems, enhancing customer experiences in retail, or predicting financial risks, data science applications have a tangible impact on people.

|

||||

|

||||

This makes data science a good career for individuals who are passionate about lifelong learning and intellectual stimulation.

|

||||

|

||||

### Versatility

|

||||

|

||||

Data science is a good career choice for those who enjoy variety and flexibility. One of the unique aspects of a career in data science is its ability to reach across various industries and domains (I’m talking technology, healthcare, finance, e-commerce, and even entertainment to name a few). This means data scientists can apply their data science skills in almost any sector that generates or relies on data—which is virtually all industries today.

|

||||

|

||||

## Cons of a Career in Data Science

|

||||

|

||||

|

||||

|

||||

The data science job is not without its “cons”; after all, there is no “perfect” role out there. Let’s now review some of the potential challenges that come with the role.

|

||||

|

||||

### Steep Learning Curve

|

||||

|

||||

The steep learning curve in data science is one of the field’s defining characteristics. New data scientists have to develop a deep understanding of technical skills, including proficiency in programming languages like Python, R, and SQL, as well as tools for machine learning and data visualization.

|

||||

|

||||

On top of the already complex subjects to master, data scientists need to find ways of staying current with the constant advancements in the field. This is not optional; it’s a necessity for anyone trying to achieve long-term success in data science. This constant evolution can feel overwhelming, especially for newcomers who are also learning foundational skills.

|

||||

|

||||

Despite these challenges, the steep learning curve can be incredibly rewarding for those who are passionate about solving problems, making data-driven decisions, and contributing to impactful projects.

|

||||

|

||||

While it might sound harsh, it’s important to note that the dedication required to overcome these challenges often results in a fulfilling and (extremely) lucrative career in the world of data science.

|

||||

|

||||

### High Expectations

|

||||

|

||||

Data science positions come with high expectations from organizations. Data scientists usually have the huge responsibility of delivering actionable insights and ensuring these insights are both accurate and timely.

|

||||

|

||||

One of the key challenges data science professionals face is managing the pressure to deliver results under tight deadlines (they’re always tight). Stakeholders often expect instant answers to complex problems, which can lead to unrealistic demands.

|

||||

|

||||

To succeed in such environments, skilled data scientists need strong communication skills to explain their findings and set realistic expectations with stakeholders.

|

||||

|

||||

### Potential Burnout

|

||||

|

||||

The high demand for data science skills usually translates into heavy workloads and tight deadlines, particularly for data scientists working on high-stakes projects (working extra hours is also not an uncommon scenario).

|

||||

|

||||

Data scientists frequently juggle multiple complex responsibilities, such as modeling data, developing machine learning algorithms, and conducting statistical analysis—often within limited timeframes.

|

||||

|

||||

The intense focus required for these tasks, combined with overlapping priorities (and a small dose of poor project management), can lead to mental fatigue and stress.

|

||||

|

||||

Work-life balance can also be a challenge for data scientists, giving them another reason for burnout. Combine that with highly active industries like finance, and you have a combination that is very hard to balance.

|

||||

|

||||

To mitigate the risk of burnout, data scientists can try to prioritize setting boundaries, managing workloads effectively (when that’s an option), and advocating for clearer role definitions (better separation of concerns).

|

||||

|

||||

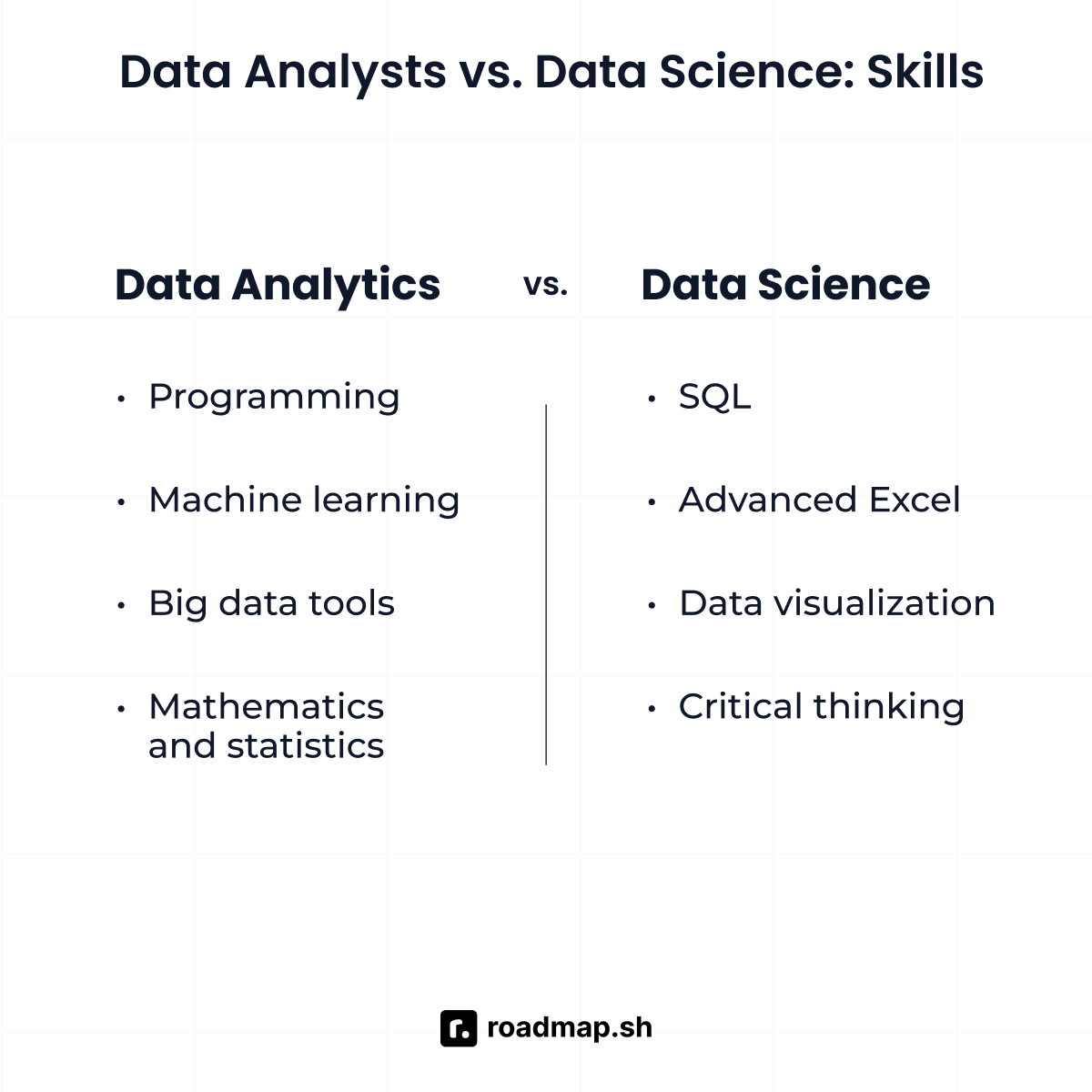

## Skills Required for a Data Science Career

|

||||

|

||||

|

||||

|

||||

To develop a successful career in data science, not all of your [skills](https://roadmap.sh/ai-data-scientist/skills) need to be technical. You also need soft skills, domain knowledge, and a mentality of lifelong learning.

|

||||

|

||||

Let’s take a closer look.

|

||||

|

||||

### Technical Skills

|

||||

|

||||

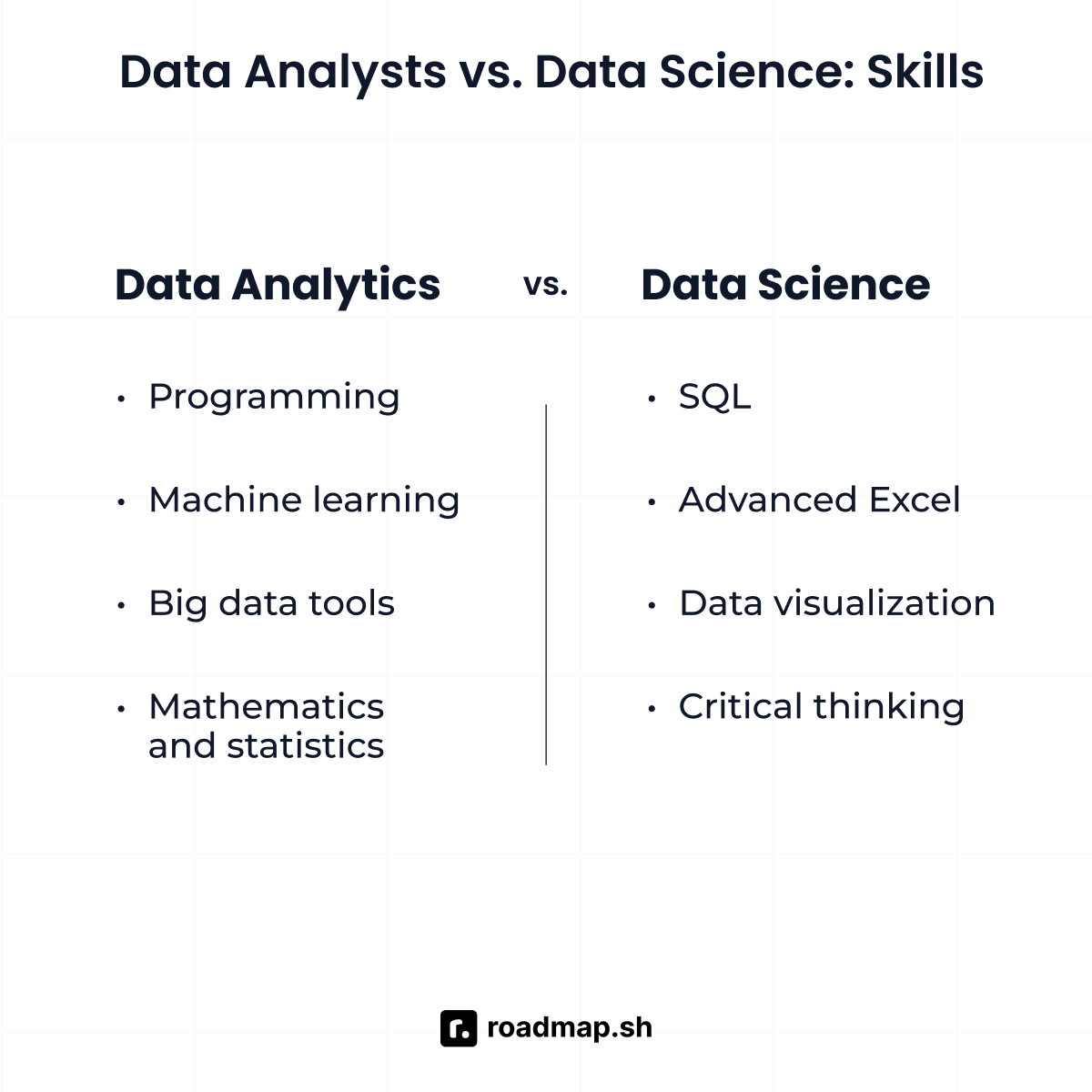

The field of data science requires strong foundational technical skills. At the core of these skills is proficiency in programming languages such as Python, R, and SQL. Python is particularly well liked for its versatility and extensive libraries, while SQL is essential for querying and managing database systems. R remains a popular choice for statistical analysis and data visualization.

|

||||

|

||||

In terms of frameworks, look into TensorFlow, PyTorch, or Scikit-learn. They’re all crucial for building predictive models and implementing artificial intelligence solutions. Tools like Tableau, Power BI, and Matplotlib are fantastic for creating clear and effective data visualizations, which play a significant role in presenting actionable insights.

|

||||

|

||||

### Soft Skills

|

||||

|

||||

As I said before, it’s not all about technical skills. Data scientists must also develop their soft skills; this is key in the field.

|

||||

|

||||

These range from problem-solving and analytical thinking to communication and the ability to collaborate with others. They all work together to help you communicate complex insights and results to non-technical stakeholders, which is going to be a key activity in your day-to-day life.

|

||||

|

||||

### Domain Knowledge

|

||||

|

||||

While technical and soft skills are essential, domain knowledge often distinguishes exceptional data scientists from the rest. Understanding industry-specific contexts—such as healthcare regulations, financial market trends, or retail customer behavior—enables data scientists to deliver tailored insights that directly address business needs. If you understand your problem space, you understand the needs of your client and the data you’re dealing with.

|

||||

|

||||

Getting that domain knowledge often involves on-the-job experience, targeted research, or additional certifications.

|

||||

|

||||

### Lifelong Learning

|

||||

|

||||

Finally, if you’re going to be a data scientist, you’ll need to embrace a mindset of continuous learning. The field evolves rapidly, with emerging technologies, tools, and methodologies reshaping best practices. Staying competitive requires consistent professional development through online courses, certifications, conferences, and engagement with the broader data science community.

|

||||

|

||||

Lifelong learning is not just a necessity but also an opportunity to remain excited and engaged in a dynamic and rewarding career.

|

||||

|

||||

## How to determine if data science is right for you?

|

||||

|

||||

|

||||

|

||||

How can you tell if you’ll actually enjoy working as a data scientist? Even after reading this far, you might still have some doubts. So in this section, I’m going to look at some ways in which you can validate that you’ll enjoy the job of a data scientist before you go through the process of becoming one.

|

||||

|

||||

### Self-Assessment Questions

|

||||

|

||||